How to reduce the load?

-

Hi there!

I have very high load on our server because of NodeBB traffic. The forum is very lightly used, 10-20 posts daily, but spiders and the websocket connections between the forum and clients cause high traffic and trigger a lot of MongoDB queries.

Is there a way to mitigate this, such as a web proxy (Varnish), etc., without disabling crawler indexing? I saw the Varnish configuration in the docs, but it seems that it does not cache.

Regards!

Normando

-

Are you using nginx to serve static assets?

-

@pitaj said in How to reduce the load?:

Are you using nginx to serve static assets?

Yes. This is my conf

    upstream io_nodes {
        ip_hash;
        server 127.0.0.1:20000;
        server 127.0.0.1:20001;
    }

    server {
        listen 192.168.10.10:80;
        server_name vapeandoargentina.com.ar;
        return 301 https://$server_name$request_uri;
    }

    server {
        listen 192.168.10.10:443 ssl http2;
        server_name vapeandoargentina.com.ar;

        # access_log /var/log/nginx/vapor-access.log;
        access_log off;
        error_log /var/log/nginx/vapor-error.log;

        ssl_certificate /etc/letsencrypt/live/vapeandoargentina.com.ar/fullchain.pem; # managed by Certbot
        ssl_certificate_key /etc/letsencrypt/live/vapeandoargentina.com.ar/privkey.pem; # managed by Certbot

        add_header X-Frame-Options "SAMEORIGIN";
        add_header X-Download-Options noopen;
        add_header X-Permitted-Cross-Domain-Policies none;

        proxy_set_header X-Forwarded-Proto $scheme;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header Host $http_host;
        proxy_set_header X-NginX-Proxy true;
        proxy_redirect off;

        # Socket.io Support
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection "upgrade";

        proxy_buffers 4 256k;
        proxy_buffer_size 128k;
        proxy_busy_buffers_size 256k;
        proxy_read_timeout 180s;
        proxy_send_timeout 180s;

        gzip on;
        gzip_min_length 1000;
        gzip_proxied off;
        gzip_types text/plain application/xml application/x-javascript text/css application/json;

        location @nodebb {
            proxy_pass http://io_nodes;
            #proxy_pass http://127.0.0.1:6081;
        }

        location ~ ^/assets/(.*) {
            root /opt/vapor/nodebb/;
            try_files /build/public/$1 /public/$1 @nodebb;
        }

        location /plugins/ {
            root /opt/vapor/nodebb/build/public/;
            try_files $uri @nodebb;
        }

        location / {
            proxy_pass http://io_nodes;
            #proxy_pass http://127.0.0.1:6081;
        }
    }

-

@normando Hmm.. curious... how many worker processes and connections?

    nginx -T| grep -i worker_connect
    nginx: the configuration file /etc/nginx/nginx.conf syntax is ok
    nginx: configuration file /etc/nginx/nginx.conf test is successful
    worker_connections 1024;
    [root@forums nginx]# nginx -T| grep -i worker_process
    nginx: the configuration file /etc/nginx/nginx.conf syntax is ok
    nginx: configuration file /etc/nginx/nginx.conf test is successful
    worker_processes auto;

I am not all that familiar with nginx's proxy tunables. And some of above differs from my set up. Be that as it may, why not spin up a couple more nodebb nodes, i.e. 20002 and 20003? You've only got two. Just to see what happens whilst yer' testing anyways, eh?
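For what it's worth, a rough sketch of what the two extra nodes could look like on the nginx side. This only extends the `upstream` block posted earlier in the thread; it assumes NodeBB itself has also been told to listen on 20002 and 20003 (as far as I know that is the `port` array in `config.json`, but check the scaling docs for your version). `ip_hash` stays, since socket.io wants a client to keep landing on the same node.

```nginx
# Sketch only: same upstream as posted above, with two more nodes added.
# Assumes NodeBB processes are actually listening on 20002 and 20003.
upstream io_nodes {
    ip_hash;                    # sticky per client IP, needed for socket.io
    server 127.0.0.1:20000;
    server 127.0.0.1:20001;
    server 127.0.0.1:20002;     # new node (assumption)
    server 127.0.0.1:20003;     # new node (assumption)
}
```

Run `nginx -t` before reloading, and keep in mind that more nodes spread the CPU load but send just as many queries to MongoDB.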

Beyond tweakin' and tunin' nginx et al., you are effectively experiencing a low-level denial of service. So you must take steps to mitigate at the problem source. Maybe some adaptive firewall rulesets? Maybe a WAF? Maybe Fail2Ban?

Have fun! o/
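One more option along the same lines, sketched with nginx's own request limiting rather than a firewall, WAF or Fail2Ban: throttle only clients whose user agent looks like a crawler. The regex, the zone name `crawlers_rl` and the rate are made-up values for illustration, not something taken from the thread, so tune them against real logs. Requests whose key is empty are not counted by `limit_req`, which is what leaves normal visitors untouched.

```nginx
# http context (e.g. nginx.conf or a conf.d include)

# Key is the client address for crawler-looking user agents, empty otherwise.
# The pattern is a rough assumption, not an exhaustive bot list.
map $http_user_agent $crawler_key {
    default               "";
    ~*(bot|crawl|spider)  $binary_remote_addr;
}

# Zone name and rate are hypothetical; an empty key is never rate limited.
limit_req_zone $crawler_key zone=crawlers_rl:10m rate=2r/s;

# server context: only the dynamic location is throttled
server {
    location / {
        limit_req zone=crawlers_rl burst=10 nodelay;
        limit_req_status 429;      # tell the crawler to slow down
        proxy_pass http://io_nodes;
    }
}
```

Crawlers that honor 429 should back off by themselves; the ones that don't at least stop reaching NodeBB and MongoDB at full speed.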

-

@gotwf I will have fun with the DoS

Anyway, seems crawler bots.

Yes, I get the same as you:

    # nginx -T| grep -i worker_connect
    nginx: the configuration file /etc/nginx/nginx.conf syntax is ok
    nginx: configuration file /etc/nginx/nginx.conf test is successful
    worker_connections 1024;
    # nginx -T| grep -i worker_process
    nginx: the configuration file /etc/nginx/nginx.conf syntax is ok
    nginx: configuration file /etc/nginx/nginx.conf test is successful
    worker_processes auto;

I will test increasing the number of nodes, but I am still thinking about how to cache the output.
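On caching the output, a hedged sketch using nginx's built-in proxy cache instead of Varnish. It only stores and serves pages for requests that look like crawlers and carry no cookies, so logged-in users and websocket upgrades always go straight to NodeBB. The cache path, the zone name `crawler_cache`, the regex and the one-minute lifetime are all assumptions for illustration. nginx will not store a response that carries `Set-Cookie` or a restrictive `Cache-Control` unless told to ignore those headers, hence the `proxy_ignore_headers` line; that is only reasonable here because cookie-carrying requests never touch the cache.

```nginx
# http context

# Hypothetical cache location and zone name.
proxy_cache_path /var/cache/nginx/nodebb_crawlers levels=1:2
                 keys_zone=crawler_cache:10m max_size=256m inactive=10m;

# 1 = not a crawler (skip the cache), 0 = looks like a crawler.
# The pattern is a rough assumption, not an exhaustive bot list.
map $http_user_agent $not_crawler {
    default               1;
    ~*(bot|crawl|spider)  0;
}

# server context
server {
    location / {
        proxy_cache       crawler_cache;
        proxy_cache_key   $scheme$host$request_uri;
        proxy_cache_valid 200 1m;

        # Skip the cache for non-crawlers, any request with cookies,
        # and websocket upgrade requests.
        proxy_cache_bypass $not_crawler $http_cookie $http_upgrade;
        proxy_no_cache     $not_crawler $http_cookie $http_upgrade;

        # Without this, backend cookies or cache headers can prevent storing.
        proxy_ignore_headers Set-Cookie Cache-Control Expires;

        proxy_cache_lock      on;                    # one backend fetch per key
        proxy_cache_use_stale updating error timeout;

        proxy_pass http://io_nodes;
    }
}
```

Crawlers would then see pages up to a minute old, which should be fine for indexing. Test it on a staging copy first, since anything stored in this cache is shared between every client that matches the pattern.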

Thanks

-

@normando Wondering if this will help. I use caching over at https://sudonix.com and it's set as follows:

    server {
        listen x.x.x.x;
        listen [::]:80;
        server_name www.sudonix.com sudonix.com;
        return 301 https://sudonix.com$request_uri;
        access_log /var/log/virtualmin/sudonix.com_access_log;
        error_log /var/log/virtualmin/sudonix.com_error_log;
    }

    server {
        listen x.x.x.x:443 ssl http2;
        server_name www.sudonix.com;
        ssl_certificate /home/sudonix/ssl.combined;
        ssl_certificate_key /home/sudonix/ssl.key;
        return 301 https://sudonix.com$request_uri;
        access_log /var/log/virtualmin/sudonix.com_access_log;
        error_log /var/log/virtualmin/sudonix.com_error_log;
    }

    server {
        server_name sudonix.com;
        listen x.x.x.x:443 ssl http2;
        access_log /var/log/virtualmin/sudonix.com_access_log;
        error_log /var/log/virtualmin/sudonix.com_error_log;

        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto https;
        proxy_set_header Host $http_host;
        proxy_set_header X-NginX-Proxy true;
        proxy_redirect off;

        # Socket.io Support
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection "upgrade";

        gzip on;
        gzip_min_length 1000;
        gzip_proxied off;
        gzip_types text/plain application/xml text/javascript application/javascript application/x-javascript text/css application/json;

        location @nodebb {
            proxy_pass http://127.0.0.1:4567;
        }

        location ~ ^/assets/(.*) {
            root /home/sudonix/nodebb/;
            try_files /build/public/$1 /public/$1 @nodebb;
            add_header Cache-Control "max-age=31536000";
        }

        location /plugins/ {
            root /home/sudonix/nodebb/build/public/;
            try_files $uri @nodebb;
            add_header Cache-Control "max-age=31536000";
        }

        location / {
            proxy_pass http://127.0.0.1:4567;
        }

        add_header X-XSS-Protection "1; mode=block";
        add_header X-Download-Options "noopen" always;
        add_header Content-Security-Policy "upgrade-insecure-requests" always;
        add_header Referrer-Policy 'no-referrer' always;
        add_header Permissions-Policy "accelerometer=(), camera=(), geolocation=(), gyroscope=(), magnetometer=(), microphone=(), payment=(), usb=()" always;
        add_header X-Powered-By "Sudonix" always;
        add_header Access-Control-Allow-Origin "https://sudonix.com https://blog.sudonix.com" always;
        add_header X-Permitted-Cross-Domain-Policies "none" always;

        fastcgi_split_path_info ^(.+\.php)(/.+)$;

        ssl_certificate /home/sudonix/ssl.combined;
        ssl_certificate_key /home/sudonix/ssl.key;

        rewrite https://sudonix.com/$1 break;
        if ($scheme = http) {
            rewrite ^/(?!.well-known)(.*) https://sudonix.com/$1 break;
        }
    }

-

@normando said in How to reduce the load?:

Anyway, seems crawler bots.

Do you have the logs? Is it primarily Googlebot? It will come in like a thundering herd of thunderin' hoovers. It does not respect every convention, e.g. the Crawl-delay directive in robots.txt, but rather requires you to sign up for their webmaster tools. Anyways, back to the relevance: if you have not, then Googlebot will basically scale to suck up as much as it can as fast as it possibly can. Hence, if you are running NodeBB on a VM in some reasonably well connected data center, network bandwidth is unlikely to be the bottleneck, leaving the VM itself.

The googlebot comes in fast, but also leaves fast. Several times a day, at +/- regular intervals depending on site activity and its mystery algos. So if you are seeing more off the wall bots, at random times 'twixt visits, then maybe these bots warrant further investigation. Otherwise, not much you can do about goog bot. But maybe give it more resources and it can leave sooner.

Have fun!
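On the logs question: the config posted earlier has `access_log off;`, so there is nothing to look at until logging is switched back on. A minimal sketch, assuming a custom format named `bots` (a made-up name) that records the user agent and request time, written to the path from the commented-out line in that config:

```nginx
# http context: hypothetical format name "bots"
log_format bots '$remote_addr [$time_local] "$request" $status '
                '$request_time "$http_user_agent"';

# server context: replaces "access_log off;" while investigating
server {
    access_log /var/log/nginx/vapor-access.log bots;
}
```

A day or so of that should show whether the load is Googlebot arriving in bursts or a crowd of lesser-known crawlers in between.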

-

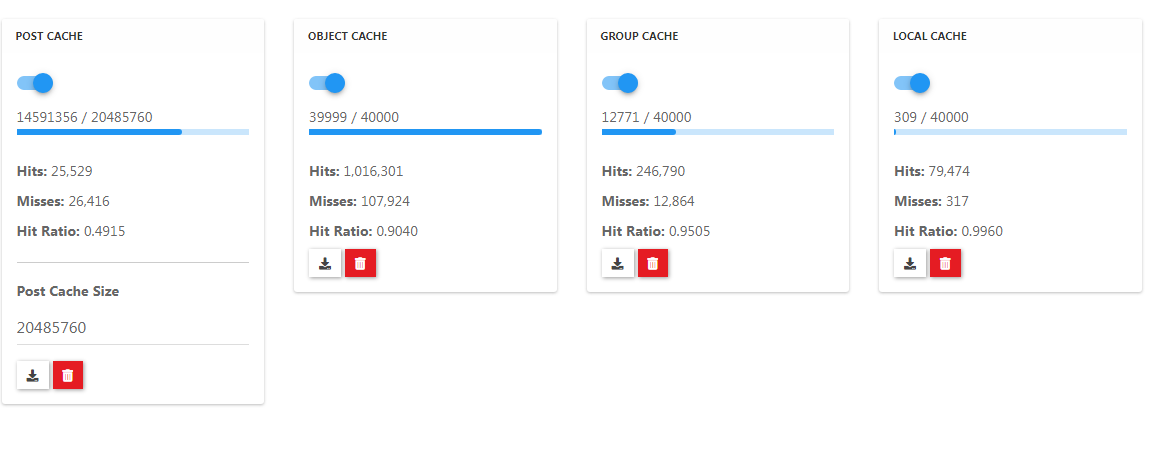

Another quick thought: What is ACP->Advanced->Cache telling you about your hit ratios, eh?

Have fun!

-

@phenomlab said in How to reduce the load?:

@julian said in How to reduce the load?:

HTTP 420 Enhance Your Calm

Lenina Huxley... And that made me think of Brave New World, which I realize wasn't your intention, but now I want to read that book again...

used as a rate-limiting mechanism.