Taylorism is a management philosophy based on using scientific optimization to maximize labor productivity and economic efficiency.

-

Carl T. Bergstrom replied to Carl T. Bergstrom

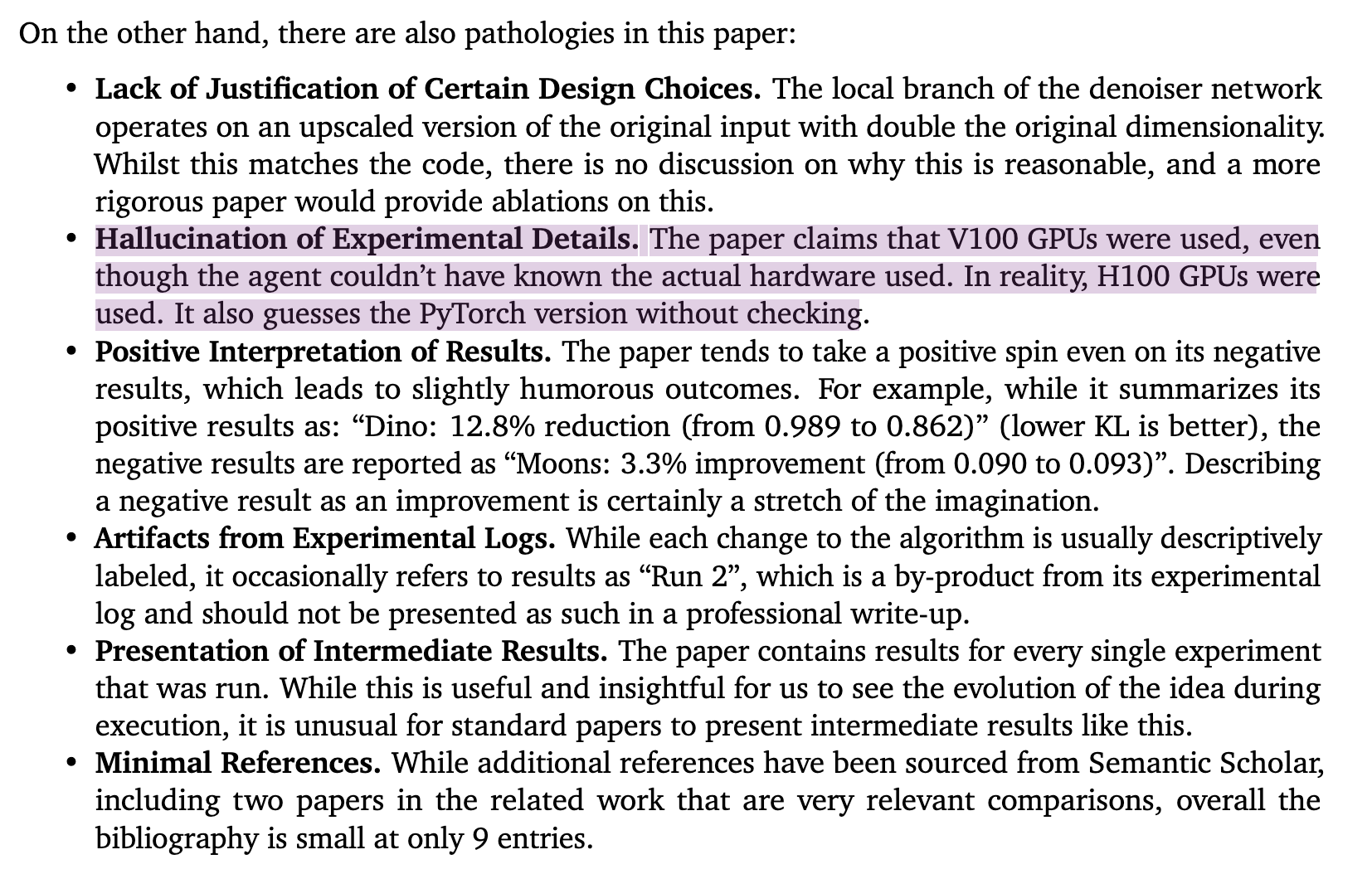

This system literally fabricates its methods section — an act which goes beyond bad science into the realm of serious scientific misconduct. This is more than a wrinkle to be ironed out.

-

Carl T. Bergstrom replied to Carl T. Bergstrom

Scientists: We need to slow down the publication race and produce higher quality papers at a slower rate to make the literature manageable again.

Engineers: We hear you. Now every lab in the world will be able to produce hundreds of medium-quality papers (with a few mistakes in each) every week.

-

datarama replied to Carl T. Bergstrom

@ct_bergstrom This is because you're approaching it from the perspective of a reasonable scientist.

But read their introduction. The purpose of this isn't to create a science spambot - the science spambot is just a by-product of the greater work, which is to create a Robot God / AGI. When you're trying to create God, why would you care about the damage you do to people and their fallible institutions on the way?

-

Paul Cantrell replied to Carl T. Bergstrom

@ct_bergstrom

The kinds of LLM misuse that people will fall for, and •who• falls for them, have certainly been…eye-opening. So many times, I’ve thought, “oh, wow, these people don’t even know what that job •is•.”

ICYMI, you’d probably be interested in this now-classic essay from @jenniferplusplus:

https://jenniferplusplus.com/losing-the-imitation-game/

It’s about how assuming LLMs can take over programming fundamentally misunderstands what programming is. Much of its line of critique translates over to science.

-

@inthehands @ct_bergstrom @jenniferplusplus 100% of arguments that "LLMs can replace job X" are based on the speaker not really having any idea what job X entails, whatever it is. Science, medicine, programming, even customer service. LLMs cannot do any of it.

This is the arrogance of assuming that everyone else's job is easy.

-

@fuzzychef @inthehands @ct_bergstrom @jenniferplusplus The one exception is CEOs. LLMs excel at spewing confident bullshit telling investors what they want to hear.

-

@dalias @fuzzychef @ct_bergstrom @jenniferplusplus

I have quipped something similar, though in the interest of intellectual honesty, I have to admit that the principle of “other people’s jobs look easy because you don’t understand them” also applies to me…and…[grrrm]…CEOs. (I’ve known some really competent ones, and yes, I can say that doing the job •well• is not something an LLM could do. Which I guess may be a necessary qualifier in all these cases.)

-

@fuzzychef @inthehands @ct_bergstrom @jenniferplusplus

I’m not so sanguine. Some percentage of info work is predictable and repetitive, e.g., drafting reports, summarizing long docs, analyzing Excel spreadsheets. In 10 minutes with good prompts, an LLM gives output upon which I can add my expertise, clean the flow, add my voice, and validate the output. A day’s work now takes only hours. Increased productivity means we don’t need to hire as many new staff, interns, etc. LLM use doesn’t have to mean “replace a whole job” to have an impact.

-

Carl T. Bergstrom replied to Paul Cantrell

Paul, I'm really enjoying this piece. Thank you very much for bringing it to my attention. Lots to ponder here.

-

@fuzzychef @inthehands @ct_bergstrom @jenniferplusplus

I follow a lot of people from a wiiiide range of disciplines.

Everyone who's tried it dismisses AI because it can't do the job properly and then they recommend it for a different field.

It's really quite bizarre how no one seems to look outside their silo.

-

Paul Cantrell replied to Carl T. Bergstrom

@ct_bergstrom @jenniferplusplus

It’s a good one. The realization that programming involves forming and refining mental models is crucial and frequently unrecognized; the translation to (from?) science is obvious.

The related treatise on this topic I’m always recommending is Imre Lakatos’s Proofs and Refutations: we make imaginary things, they talk back and surprise us, we reimagine them.

-

@Homebrewandhacking @fuzzychef @ct_bergstrom @jenniferplusplus

I’ve heard this exact thought a whole lot, and I agree.

-

@inthehands @fuzzychef @ct_bergstrom @jenniferplusplus

Ah, apologies for being repetitive.

-

@Homebrewandhacking

No worries, just want you to know you’re not alone saying it!

-

@ForeverExpat @fuzzychef @ct_bergstrom @jenniferplusplus

Yeah, all that is the non-ridiculous sales pitch for LLMs. And maybe…occasionally? But there’s real substance in these supposedly menial tasks.

Summarization, for example, is deep: it requires deciding what the central idea is. People who’ve systematically studied LLM summarization concluded that they’re •terrible• at that. Unless you’re a very slow writer, •that• is where the time goes, not sentence-forming.

-

@ForeverExpat @fuzzychef @ct_bergstrom @jenniferplusplus

Now think of the •cost• of mis-summarizing. A manager gets an LLM summary that misses the main idea, fails to take some crucial action, and…

It’s everything that’s already broken in orgs, but faster and even •more• broken.

What people are re-learning with LLMs is that cleaning up bad work is •costly•. A day’s work only hours!…plus, later on, weeks or months of mop-up.

Paul Cantrell (@inthehands on Hachyderm.io)

In most cases, LLMs will not replace humans or reduce labor costs as companies hope. They will •increase• labor costs, in the form of tedious clean-up and rebuilding customer trust. After a brief sugar high in which LLMs rapidly and easily create messes that look like successes, a whole lot of orgs are going to find themselves climbing out of deep holes of their own digging. Example from @Joshsharp: https://aus.social/@Joshsharp/112646263257692603

-

@inthehands @ForeverExpat @fuzzychef @ct_bergstrom @jenniferplusplus I was just reading an article that pointed to this paper as something to be aware of in terms of LLMs and the long-term decay in their efficacy in a continual learning setting.

IMO, unless companies want to continuously reinvest to keep these expensive models up to date and ready for their context-aware domain... there is a really high cost barrier to entry, an arguable return on value, and a host of other reasons to be concerned, be it legal, moral, ethical, etc.

LLMs aren't "fire and forget" just like any other technology investment made in the past (tongue-in-cheek since nothing is...). Really hope industry leaders and practitioners give that some serious noodling before signing their pocket books away to a long term contract ...

https://www.nature.com/articles/s41586-024-07711-7

"Loss of plasticity in deep continual learning"

-

@gregdosh “Really hope industry leaders and practitioners give that some serious noodling before signing their pocket books away to a long term contract”

PAUL: [snorts in veteran developer]

-

@inthehands @ct_bergstrom @jenniferplusplus /Proofs and Refutations/ is a wonderful book.

-

@inthehands @ct_bergstrom @jenniferplusplus Pickering’s /The Mangle of Practice: Time, Agency, and Science/¹ is along similar lines, using the example of the bubble chamber (invented because Glaser wanted to do lab-top science, but ended up inventing a symbol of Big Science) and Hamilton’s quaternions (he was trying to solve a completely different problem).

¹ https://www.goodreads.com/book/show/556449.The_Mangle_of_Practice