I'm seeing new rounds of posts on whether #AI communicates or truly uses language.

-

@dingemansemark @davidschlangen

but, beyond that, current LLMs can do more than just operate with conventional meaning, no?

see this example with Ollama I just ran

I think this is obviously itself only a small fraction of (full-on Herb Clark-like) negotiated meaning, but it is accepting and integrating a new word I made up in the conversation...

-

@UlrikeHahn

i think it is more precise to say they *don't* operate with meaning (conventional or otherwise), they just deal in tokens we happen to be able to assign meaning to

we are doing the heavy lifting here

as long as our prompt plus its own output fit the context size, it'll happily parrot our neologisms back at us (and yes I use that metaphor advisedly: I think it is apt in this context)

FWIW I think RLHF is the star here in packaging it all in the most obliging way possible

-

@dingemansemark but they don't just 'deal in tokens': asking ChatGPT4o to identify a table in an image or asking it to draw a table is, at the end of the day, an action in the external environment, however ChatGPT4o happens to go about producing it, no?

and, of course we are doing most of the lifting, but that's also true of any single speaker vis a vis a linguistic community.

I didn't personally invent any of the words I typed out in this response, nor could I single-handedly change them.

-

@UlrikeHahn

the heavy lifting done by members of a community of practice is in large part reciprocal; in the funhouse mirror of LLMs, it's really all you

how does the response to the prompt come to be "an action in the external environment"? which environment, precisely?

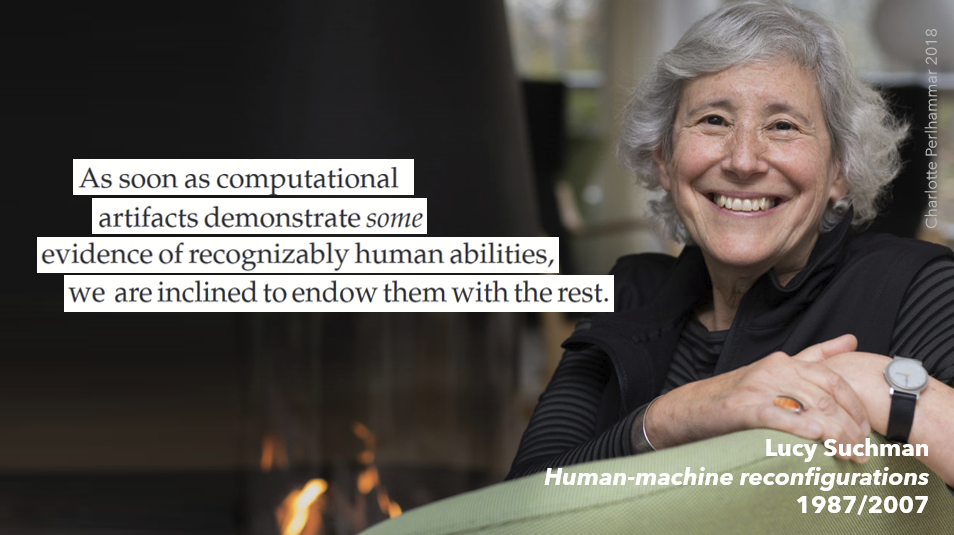

I don't understand the impulse to ascribe agency to an LLM and what seems to be a certain eagerness to obscure the processes of human sense-making and facilitation at work (see Lucy Suchman's Human/Machine Reconfigurations)

-

@dingemansemark drawing a red bounding box on an image or producing an image is 'an action in the external environment'.

And I am not 'eager to ascribe agency' to LLMs, I want to understand how they do or do not relate to a family of inter-related concepts ('meaning', 'communication', 'agency', 'mental state', 'belief', 'computation', etc.) because I'm a cognitive scientist and those are foundational concepts of the work I've been pursuing for many years.

That seems a modest goal, no?

-

@UlrikeHahn

but why then, as a cognitive scientist, ignore all the material contributions & human labour involved in making it seem so? I can't summarise Suchman in a toot but trust me she's worth reading

it seems to me a serious overreach to call whatever digital operations an LLM carries out when prompted (provided with material, text or otherwise) an 'action in the external environment' (why "the"? "external" how?). That is my ground for saying I see an eagerness to ascribe agency

-

@UlrikeHahn (I mean on one level I know why: cognitive science has for a long time been able to mostly ignore those material conditions & processes of situated action, choosing to privilege computations, representations, mental states, beliefs. Here too Suchman is an excellent starting point: her 1987 critique of the then-standard Schank & Abelson 'cognitive' account of planning is a model for the kind of move that we need, imo, if we want to think clearly about what LLMs are and what they do)

-

@UlrikeHahn @dingemansemark @davidschlangen One thing that occurs to me is that the required premise could be supported by the view that (speaker-) meaning something requires having intentions directed at the mental states of other speakers, and that that condition isn't met by AI.

That wouldn't be question begging, and it has a history in philosophy of language. I don't know whether people are in fact motivated by that view.

-

@twsh @dingemansemark @davidschlangen I agree fully. But, personally, I see one line for push back that is important to me (long before LLMs). I feel there is a wider tendency in thinking about language that is sufficiently prevalent that it deserves a name, so I’ll call it the ‘in-every-instance fallacy’. It’s the conflation between what are fundamental and necessary features of language with the idea that these features have to be present in *each and every token case* 1/8

-

@twsh @dingemansemark @davidschlangen 4/8 grammatical sentences of English), but precisely because language involves a conventional system I see no reason for ‘communication’ and ‘understanding’ to feature equally in each token case. In the same way that I find it natural to say that “penguin” means PENGUIN regardless of whether I as an individual speaker can pick out penguins in the world, I find it more natural to say that randomly assembled fridge magnets that happen to spell out

-

@twsh @dingemansemark @davidschlangen *as a whole* is grounded, and that can be achieved by grounding directly only some parts (so I was always going to be partial to @dcm’s paper in the related thread here https://social.sunet.se/@dcm/113075064787214273)

I personally see the same fallacy tripping up this exchange. I have no doubt whatsoever that understanding language in general requires starting from communication and understanding (and not, as Chomskyan linguistics did, from the point of language as the set of 3/8

-

@twsh @dingemansemark @davidschlangen 2/8 I think that’s a fallacy because it fails to do justice to the likewise essential feature of language that it involves a *conventional system*, and both parts of that phrase matter. One example of this fallacy is the confusion between the idea that meanings need to ultimately be grounded (true) and the erroneous belief that this implies each word *individually* needs grounding, when, in fact, what matters is that the system of interconnected meanings

-

@twsh @dingemansemark @davidschlangen 5/8 “my life is empty” are language and mean something even though they do not constitute an “utterance”. I think it’s a fundamental feature of conventional systems that aspects can be derivative of one another (i.e. piggyback features). Well beyond just the fridge magnet, I don’t think all utterances (as a matter of fact) equally involve ‘commitments’ or ‘intentions’, ‘shared negotiation of meaning’, or ‘aiming toward others’ mental states’

-

@dingemansemark 2/5 I don’t think a meaningful account without that as a starting point is even possible. But I do feel you are over-estimating its precise role. Take as an example, your earlier reply that “the heavy lifting done by members of a community of practice is in large part reciprocal; in the funhouse mirror of LLMs, it's really all you”. I think with respect to establishing conventional meaning that assumes more than is necessary. Words have fuzzy boundaries, we disagree

-

@dingemansemark Mark, I will definitely try to read her, but I don’t think I ignore the user side “labour”. To me, the contortion is in denying that drawing a table when prompted, and drawing a different one when asked for another are “actions”. And I think that has consequences with respect to whether I assign “meaning”.

I genuinely don’t want an account of language (or LLMs) that doesn’t rest on a rich notion of communication and interaction. 1/5