I just deleted 1,372 disks from Google cloud and 7 project spaces.

-

@vwbusguy @arichtman @mttaggart unless youre dealing with like, dozens or hundreds of containers that are geographically distributed, i get the impression kubernetes is just massive overhead and lots of extra attack surface. I can see how in narrow circumstances it can be useful, but so far literally every single k8s deployment ive seen is "way more overhead and complexity and attack surface, for not enough benefit"

-

@Viss @vwbusguy @arichtman I believe this is generally correct. The scale at which its utility becomes apparent will never be achieved by the vast majority of those who use it. The choice was informed by hype and a desire to believe they would one day require, as K8s puts it, "planetary scale."

-

@Viss @arichtman @mttaggart Again, I agree with you that this is true for a lot of use cases and shops. That said, you can't pretend that things were gloriously secure en masse in the older days of LAMP, Tomcat, and ASPX. Moving to Kubernetes in some cases allowed for better hygiene in general around secrets, hardening, and idempotency. For stuff like multi-tenant JupyterHub, Kubernetes is highly practical. For serving your company's blog - maybe not.

Project Jupyter

A multi-user version of the notebook designed for companies, classrooms and research labs

(jupyter.org)

-

@vwbusguy @arichtman @mttaggart thats the tug though. everyones hamfisting it in everywhere, using it for their core business infra or making it part of ci/cd pipelines. nobody is using it 'the right way'

-

Scott Williams 🐧 replied to Taggart :donor:

@mttaggart @Viss @arichtman This was definitely true in some shops. I actually remember hearing a Red Hat person advising a customer once, roughly nine years or so ago, that what they wanted OpenShift for could be done better on some regular machines running RHEL. I admired the honesty and restraint from oversell in that particular moment.

-

Scott Williams 🐧 replied to Viss

@Viss @arichtman @mttaggart CI/CD pipelines make sense - designated hardware doesn't sit idle when workers aren't running, the worker agents go away when the job is done leaving only the intended artifacts (so less attack surface on the workers), idempotency, etc.

Of course you don't *have* to do it this way, but there's a clear case to be made.

-

@vwbusguy @arichtman @mttaggart that description is not how i have seen it deployed, though

-

@Viss @arichtman @mttaggart That's how I have it deployed. All on prem with Jenkins and Rancher RKE2 k8s backends.

-

Taggart :donor: replied to Scott Williams 🐧

@vwbusguy @Viss @arichtman This conversation is quite the piece of evidence that you are the exception to the rule. Your knowledge is impressive, and rare. Certainly more so than orchestrated container deployments. Y'all are both right.

-

@mttaggart @vwbusguy @arichtman this is just the 2024 version of

- there is a 'way to do it right'

- most people do not do it that way

- the thing is almost certainly being used when it doesnt need to be

- the folks deploying the thing in most cases are not familiar enough with it, or architecture in general to adequately harden it

-- or they just dont care to, usually because compliance

it used to be lamp, now its containers

-

Scott Williams 🐧 replied to Taggart :donor:

@mttaggart @Viss @arichtman We didn't even get into immutable Linux hosts yet, either

And to be clear, I also think Viss is right. Where we've disagreed here, I'm also agreeing with him at least somewhat.

-

@mttaggart @vwbusguy @arichtman i guess the tl;dr for me is:

"if you give people a giant red george jetson button that does a thing, then people will just instinctively mash that button without ever considering the consequences. and you end up with a bunch of output that the button masher wasnt expecting and doesnt know what to do with, which often times ends up as someone elses problem, who wont be happy with this arrangement"

-

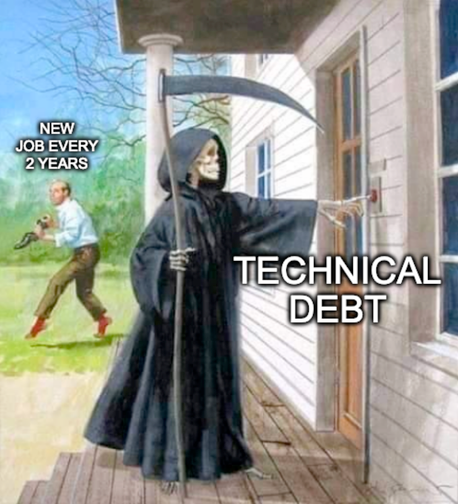

@Viss @mttaggart @arichtman I think it often happens more like this:

-

@vwbusguy @mttaggart @arichtman nailed it. but now with k8s you can cloud scale that debt at warp factor 9

-

@vwbusguy @mttaggart @arichtman just like java was 'write once, exploit everywhere', now you can take "architectural and technical misconfigurations and lack of hardening and cloud scale it"

-

@Viss @vwbusguy @arichtman I really do think a giant piece of it—especially in the tech industry/startup space itself—is a decision-making process that assumes:

- Old == bad

- We will be the next 1M user unicorn and should build for that today.

-

Scott Williams 🐧 replied to Taggart :donor:

1. Containers are old. They're basically jails and Solaris had containers in the 1990s.

2. Getting this right is a tricky problem. Arguably one viable reason *to* use public cloud is that you don't expect to scale big soon, so the cost to do so could be relatively low in OpEx dollars.

-

Scott Williams 🐧 replied to Scott Williams 🐧

@mttaggart @Viss @arichtman The "magic" about containers in either direction tends to go away once you realize that containers are just Linux processes. That's all they are - wrapped in cgroups and namespaces, and with link hijacking like a jail. That's why when you run `ps` on Linux you see the actual container process and not a hypervisor, etc. Requests and limits? That's CFS.
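
To make the "requests and limits are just CFS" point concrete, here is a rough sketch of the arithmetic, not anything posted in the thread. It assumes the commonly documented defaults: a 100 ms CFS period, CPU limits translated into a `cpu.cfs_quota_us` value, and CPU requests translated into a `cpu.shares` weight (cgroup v1 names; cgroup v2 exposes the same ideas as `cpu.max` and `cpu.weight`). The function names are made up for illustration.

```python
# Illustrative sketch only: how Kubernetes-style CPU requests and limits
# are commonly described as mapping onto Linux CFS settings (cgroup v1 names).
# Function names are hypothetical; the 100 ms period is an assumed default.

CFS_PERIOD_US = 100_000  # assumed CFS period: 100 ms, in microseconds


def parse_millicores(cpu: str) -> int:
    """Convert a CPU quantity such as "500m" or "2" into millicores."""
    if cpu.endswith("m"):
        return int(cpu[:-1])
    return int(round(float(cpu) * 1000))


def cfs_quota_us(cpu_limit: str, period_us: int = CFS_PERIOD_US) -> int:
    """CPU limit -> cpu.cfs_quota_us: CPU time allowed per CFS period."""
    return parse_millicores(cpu_limit) * period_us // 1000


def cpu_shares(cpu_request: str) -> int:
    """CPU request -> cpu.shares: relative weight when CPUs are contended."""
    return max(2, parse_millicores(cpu_request) * 1024 // 1000)


if __name__ == "__main__":
    print(cfs_quota_us("500m"))  # 50000: half a CPU per 100 ms period
    print(cpu_shares("250m"))    # 256: a quarter of the default weight of 1024
```

So a limit of `500m` is the scheduler letting that process group run for 50 ms out of every 100 ms, and a request is only a weight that matters when CPUs are contended.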

-

Taggart :donor: replied to Scott Williams 🐧

@vwbusguy @Viss @arichtman While the concept of containers is old, I think we can both agree that the "productization" of them is less so.

And as far as scale, I'm referring specifically to choosing a container orchestrator as the deployment target from day one.

-

Scott Williams 🐧 replied to Taggart :donor:

@mttaggart @Viss @arichtman Nope - Solaris did it first and *very* commercially.

Solaris Containers

With Oracle Solaris Containers you can maintain the one-application-per-server deployment model while simultaneously sharing hardware resources.

(www.oracle.com)