I just deleted 1,372 disks from Google Cloud and 7 project spaces.

-

@Viss @mttaggart @arichtman I'm guessing this was meant as a reply to a different thread? That said, having been through something similar myself, I'd say this is solid advice.

-

Scott Williams 🐧 replied to Scott Williams 🐧

@Viss @mttaggart @arichtman Reading The Phoenix Project was highly therapeutic but also very difficult, because it brought some of those experiences fresh back to mind.

-

@arichtman @Viss @mttaggart Oh, there are ways to do it, such as SOPS or any of a number of vault vendors that manage secrets or do runtime injection (I'm currently using Infisical, but that's not an endorsement).

Manage Kubernetes secrets with SOPS

Manage Kubernetes secrets with SOPS, OpenPGP, Age and Cloud KMS.

(fluxcd.io)

-

@vwbusguy @arichtman @mttaggart I've been telling my customers to handle secrets entirely out of band from CI/CD, and to basically have them deployed in a way where they're more or less hard-coded and encoded somehow, so they don't end up in text files on disk or in env vars.

-

Scott Williams 🐧 replied to Scott Williams 🐧

@arichtman @Viss @mttaggart Yes, k8s secrets could be better, but they're still an improvement over the old days, when people hard-coded credentials into their git repos assuming the repos would never be public, and/or thought the credentials were safe just because they were compiled into a JAR or WAR file and no one would dare peek inside, right?

-

Scott Williams 🐧 replied to Viss

@Viss @arichtman @mttaggart This just tells me you didn't have the wonderful joy of trying to run Docker Swarm in production in its early days, and I'm happy for you in that regard. Sweet glory, did Kubernetes solve a lot of problems compared to that.

-

@vwbusguy @Viss @mttaggart SOPS only handles encryption at rest in the repo, AFAICT: nothing at runtime, and it's only applicable to pure GitOps. Out-of-band secrets still involve non-core controllers or features, and often those have to create core `Secret` resources, which defeats most of the point of having the secrets secured externally. Unless I'm missing something...
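To make the point about core `Secret` resources concrete: the values under a Secret's `data` field are only base64-encoded, so anyone who can read the object can recover the plaintext without any key. (Encryption at rest in etcd is a separate, opt-in API-server setting on a stock cluster.) This minimal Python sketch decodes a hypothetical Secret manifest held as a plain dict; the secret name and value are made up for illustration.

```python
import base64

# Hypothetical Secret manifest, represented as a plain dict.
# Kubernetes stores each value under `data` base64-encoded: an encoding, not encryption.
secret_manifest = {
    "apiVersion": "v1",
    "kind": "Secret",
    "metadata": {"name": "db-credentials"},  # made-up name
    "type": "Opaque",
    "data": {"password": base64.b64encode(b"hunter2").decode("ascii")},  # made-up value
}


def reveal(manifest: dict) -> dict:
    """Decode every value in a Secret's `data` map; no key or password is needed."""
    return {
        key: base64.b64decode(value).decode("utf-8")
        for key, value in manifest.get("data", {}).items()
    }


if __name__ == "__main__":
    print(reveal(secret_manifest))  # prints {'password': 'hunter2'}
```

This is why access control on Secret objects (RBAC), rather than the encoding, is what actually protects them once they are in the cluster.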

-

@vwbusguy @arichtman @mttaggart this feels like one of those sorta 'if you go back further in time, you see that Docker actually introduced a lot of problems, which were then fixed by k8s' scenarios, so if your context window begins at Docker, then yeah, it's a 'measurable improvement', but if it begins 'before you installed Docker', then you're still at a net negative.

-

Scott Williams 🐧 replied to Scott Williams 🐧

@Viss @arichtman @mttaggart I literally wrote my own CNI, before I realized that's what I was doing, just trying to get a reliable network service I could use to proxy between containers. On each host it constantly called etcd to pick up changes and used regex to splice the resulting config updates into nginx, with logic to proxy subpaths, etc.
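The tool described above was never published, so the following is only a hypothetical Python sketch of the core idea: take sub-path to upstream pairs (which in that setup came from etcd; here a plain dict stands in) and regex-splice freshly rendered nginx `location` blocks into the config between two marker comments. The marker text and function names are made up; the sketch does not watch etcd or reload nginx.

```python
import re

# Hypothetical marker comments delimiting the machine-managed part of nginx.conf.
BEGIN = "# BEGIN generated routes"
END = "# END generated routes"
_REGION = re.compile(re.escape(BEGIN) + r".*?" + re.escape(END), re.DOTALL)


def render_locations(routes: dict) -> str:
    """Render one nginx `location` block per (sub-path -> upstream host:port) pair."""
    blocks = [
        f"location {path} {{\n    proxy_pass http://{upstream};\n}}"
        for path, upstream in sorted(routes.items())
    ]
    return "\n".join(blocks)


def splice(config: str, routes: dict) -> str:
    """Swap the text between the markers for freshly rendered location blocks."""
    replacement = f"{BEGIN}\n{render_locations(routes)}\n{END}"
    # A callable replacement keeps re.subn from interpreting backslashes in it.
    new_config, count = _REGION.subn(lambda _match: replacement, config, count=1)
    if count == 0:
        raise ValueError("generated-routes markers not found in config")
    return new_config


if __name__ == "__main__":
    base = (
        "server {\n"
        "    listen 80;\n"
        "    # BEGIN generated routes\n"
        "    # END generated routes\n"
        "}\n"
    )
    # Made-up routes; in the setup described in the thread these came from etcd.
    routes = {"/api/": "10.0.0.5:8080", "/web/": "10.0.0.6:3000"}
    print(splice(base, routes))
```

Because the whole marked region is regenerated each time, splicing the same routes twice yields the same file, which is what makes this kind of loop safe to run on every change notification.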

-

Scott Williams 🐧 replied to Scott Williams 🐧

@Viss @arichtman @mttaggart I never published it because it felt so dirty, I didn't want to maintain it, and with Kubernetes it was a problem I didn't have to solve.

-

@arichtman @Viss @mttaggart I was there for the 1.0 release of Kubernetes, and that's a very reductionist take on it. This was before Google was a full-blown bad guy, and I'm sure they had business reasons, but Kubernetes solved problems that Mesos and Swarm struggled to solve. Mesos was badly fragmented, with Twitter basically running its own fork; it knew how to scale big but was a struggle at small scale. Swarm had the opposite problem: it was controlled by one company and didn't scale well.

-

Scott Williams 🐧 replied to Scott Williams 🐧

@arichtman @Viss @mttaggart Keep in mind that open-sourcing Kubernetes created a lot of competition for Google. This was back when Red Hat OpenShift was an awkward Heroku-style PaaS competitor. It was great publicity for GKE, to be sure, but open-sourcing it carried significant risk, and that risk was realized.

-

@arichtman @Viss @mttaggart I liked Cilium in concept because it lets you eliminate the need for kube-proxy, but it also comes with some foot-rake opportunities and quirks in places where other CNIs don't have them.

-

@arichtman @Viss @mttaggart Layer 2/3 routing with MetalLB is very easy and simple to set up, but you need proper BGP for routing beyond that.

If you already have established network infrastructure, you can use Multus with network bridging at the host level and handle it with more traditional sysadmin networking tools: VLANs, IP pools, DHCP, etc.

-

Scott Williams 🐧 replied to Viss

@Viss @arichtman @mttaggart I use env injection from encrypted vaults in a CI context, so the same code is deployed to production, just with different credentials and endpoints, and none of those credentials live in git, container images, etc. It's the dev/staging/production model and all.

The Twelve-Factor App

A methodology for building modern, scalable, maintainable software-as-a-service apps.

(12factor.net)
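The approach above corresponds to the Twelve-Factor "store config in the environment" principle. Here is a minimal Python sketch, assuming hypothetical variable names `DATABASE_URL` and `API_TOKEN` that the CI pipeline injects from a vault: the application reads them at startup and fails fast if any is missing, so the identical build can run in dev, staging, and production.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Hypothetical variable names; CI would inject these from a vault per environment.
REQUIRED = ("DATABASE_URL", "API_TOKEN")


@dataclass(frozen=True)
class Settings:
    database_url: str
    api_token: str


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Build settings from the environment (Twelve-Factor style).

    The same code runs everywhere; only the injected values differ, so no
    credentials need to live in git or in the container image.
    """
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise RuntimeError("missing required environment variables: " + ", ".join(missing))
    return Settings(database_url=env["DATABASE_URL"], api_token=env["API_TOKEN"])


if __name__ == "__main__":
    # Stand-in for what a staging pipeline might inject; values are made up.
    staging = {"DATABASE_URL": "postgres://staging-db.internal/app", "API_TOKEN": "dummy"}
    print(load_settings(staging).database_url)
```

Accepting an optional mapping instead of always reading `os.environ` keeps the function easy to test; note that Viss's objection earlier in the thread is specifically to secrets living in env vars, which this pattern still does.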

-

Scott Williams 🐧 replied to Ariel

@arichtman @Viss @mttaggart Depending on your Kubernetes distro, that is largely true. OpenShift and Rancher include optional operators for it.

The one Rancher ships is based on vals and supports SOPS.

GitHub - digitalis-io/vals-operator: Kubernetes Operator to sync secrets between different secret backends and Kubernetes

(github.com)

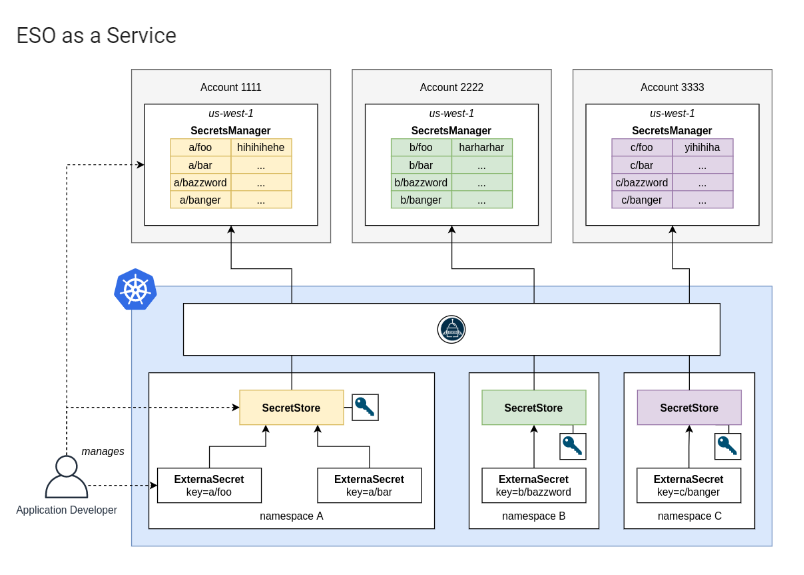

Red Hat's is ESO:

How to Setup External Secrets Operator (ESO) as a Service

This guide makes an attempt to show one of the many methods we can utilize to store "sensitive" data in an external secrets management system such as AWS Secrets Manager, retrieve that data via the ESO and have them stored in Kubernetes secrets for applications to use.

(www.redhat.com)
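Both operators follow the general Kubernetes controller pattern: read values from an external backend, then create or update a core `Secret` so workloads can consume it. The Python sketch below is a hypothetical, heavily simplified reconcile step, not code from vals-operator or ESO; a plain dict stands in for the backend, no Kubernetes API calls are made, and the function names are made up.

```python
import base64
from typing import Mapping, Optional


def desired_secret(name: str, namespace: str, values: Mapping[str, str]) -> dict:
    """Build a core Kubernetes Secret manifest from values read out of an external backend."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # Secret `data` values must be base64-encoded strings.
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


def reconcile(
    current: Optional[dict],
    backend_values: Mapping[str, str],
    name: str,
    namespace: str,
) -> Optional[dict]:
    """One simplified sync step: return the Secret to apply, or None if already in sync."""
    want = desired_secret(name, namespace, backend_values)
    if current is not None and current.get("data") == want["data"]:
        return None  # cluster already matches the backend; nothing to do
    return want


if __name__ == "__main__":
    # A plain dict stands in for the secret backend; values are made up.
    backend = {"password": "s3cret"}
    first = reconcile(None, backend, "db-credentials", "default")
    print(first["data"])
    print(reconcile(first, backend, "db-credentials", "default"))  # None: already in sync
```

The end product is still an ordinary `Secret` with base64 `data` inside the cluster, which is the trade-off raised earlier in the thread: the external store remains the source of truth, but the value does not stay outside Kubernetes.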

-

Scott Williams 🐧 replied to Scott Williams 🐧

@arichtman @Viss @mttaggart The short of it is, if you care about Kubernetes security, you're going to pay up for Rancher or OpenShift support. Rancher RKE2 was originally written specifically around FIPS compliance for government vendors and expanded its customer base from there.

-

@Viss @arichtman @mttaggart To be fair, you're not wrong for a whole lot of use cases. If you built your empire on a LAMP stack, that doesn't translate well, in a scalable way, to a Kubernetes world, because the stack was stateful and built for vertical scaling. Forcing it into Kubernetes means retooling core parts of the stack's architecture for an outcome that might not be demonstrably better.

-

@vwbusguy @arichtman @mttaggart unless you're dealing with, like, dozens or hundreds of containers that are geographically distributed, I get the impression Kubernetes is just massive overhead and lots of extra attack surface. I can see how in narrow circumstances it can be useful, but so far literally every single k8s deployment I've seen is "way more overhead and complexity and attack surface, for not enough benefit."

-

@Viss @vwbusguy @arichtman I believe this is generally correct. The scale at which its utility becomes apparent will never be achieved by the vast majority of those who use it. The choice was informed by hype and a desire to believe they would one day require, as K8s puts it, "planetary scale."