I'm seeing new rounds of posts on whether #AI communicates or truly uses language.

-

@dingemansemark sorry, that's all really ill-formed and muddled, but that's precisely why I'd love the debate.

Also, I'm not sure the two points don't matter specifically in combination (multi-modality *and* RLHF). Maybe it would help to start from the other end. What *else* is there shaping human communication than some kind of grounding and interactional constraints?

-

@UlrikeHahn but what NLP calls multimodality (a heap of text:image labels) is also incomparable to the rich, embodied situational grounding seen in human-human interaction — I see not a difference in degree but in kind

the RLHF nozzle obscures the fundamental differences by making LLMs' behavior superficially more human-like, which is why I find it interesting (and why users find LLMs compelling)

-

@dingemansemark thanks Mark! I think my problem here is the same as it always is with embodied views of cognition. I'm totally sympathetic, but ultimately I feel they never fully deliver the goods and end up feeling question begging to me.

So, yes, human-human interaction is richer, and that's somewhere you can draw the line, but *why there*?

Why not view the LLM case as proto-communication? WHY does 'richness' as a notion with a continuum cross a *qualitative* threshold for you? 1/2

-

@dingemansemark 2/2 or to put it differently, do you think we communicate with dogs who learn words? do they communicate with us? Does Kanzi exhibit linguistic communication?

what is your view on those, and how are they the same or different to LLMs?

-

@UlrikeHahn

yes, we communicate with dogs and other animals. i don't care much for policing the boundaries of language (see https://www.jbe-platform.com/content/journals/10.1075/avt.00095.ras )

LLMs are just a fundamentally different kind of entity: not evolved, not precarious, not autopoietic, not self-sustaining, not self-organizing — all things we share with dogs and many other beings we coexist with

some elements of what they do may seem similar, how they do it is not, as argued here:

https://direct.mit.edu/opmi/article/doi/10.1162/opmi_a_00160/124234/The-Limitations-of-Large-Language-Models-for

-

@dingemansemark will read, but just to clarify, nothing about our exchange is (to me) about 'policing the boundaries of language'. It's about the underlying intuitions.

see the link to my blog post earlier in the thread which is precisely about why drawing boundaries for the sake of it is not a useful endeavour.

We're already on the same page with that.

-

@dingemansemark and yes, I agree that "LLMs are just a fundamentally different kind of entity: not evolved, not precarious, not autopoietic, not self-sustaining, not self-organizing", but what I am (still) missing is the explicit connection of that to 'communication' or embodied meaning.

-

@UlrikeHahn how could an entity like that ever 'mean' or 'communicate' anything except in the eye of the (autopoietic, etc) beholder? to me it feels like a category mistake on the order of saying a funhouse mirror is capable of insulting you

-

@dingemansemark because standard accounts of 'meaning' haven't actually explicitly posited an additional clause: "and btw this definition applies only to autopoietic, evolutionarily formed agents"

it doesn't mean they shouldn't, it's just that I don't think they have

see also this unfolding thread:

Ulrike Hahn (@[email protected])

Do #LLMs have mental states? On standard definitions in philosophy, a state or event is a "mental state" if and only if it is a conscious state or an intentional state. I assume LLMs aren't conscious, but how is a currently active representation that mediates ChatGPT4o drawing a table, identifying a table in an image, or answering a query about tables *not* an intentional state in the sense of this definition, i.e. something that has 'intentionality' in the sense of 'aboutness'? @[email protected]

FediScience.org (fediscience.org)

-

@UlrikeHahn you may be right about 'standard' accounts (which?) though I think any account that includes notions like speaker's meaning, intention, commitment (=most of them since at least Malinowski and Firth a century ago) is pretty clear on the intersubjective nature of meaning (see Bender & Koller or @davidschlangen on this)

I still don't see more than a funhouse mirror that enables us to see, at best, ourselves in a new light

-

@dingemansemark @davidschlangen

Mark, I think the issue is that historically those accounts haven't really needed to specify what counts as "a speaker" in the first place.

We can now sneak in "only autopoietic systems that are a product of evolution can qualify as speakers" but we haven't done that in the past (because we didn't have to), and it remains (to me) question begging to just assert it as a condition now.

and I don't think the appeal to inter-subjectively negotiated meaning 1/2

-

@dingemansemark @davidschlangen 2/2 is sufficient to establish that. Yes, there is an important part of meaning that is negotiating (and creating) meaning in context. But there are also parts that aren't and that are parasitic on language as a conventional system.

Just ruling those aspects of meaning out doesn't work for me, see here:

"Stochastic parrot" is a misleading metaphor for LLMs

Metaphors are hugely important both to how we think about things and how we structure debate, as a long research tradition within cogniti...

UlrikeHahn (write.as)

-

@dingemansemark @davidschlangen

but, beyond that, current LLMs can do more than just operate with conventional meaning, no?

see this example with Ollama I just ran

I think this is obviously itself only a small fraction of (full on Herb Clark like) negotiated meaning, but it is accepting and integrating a new word I made up in the conversation....

-

@UlrikeHahn

i think it is more precise to say they *don't* operate with meaning (conventional or otherwise), they just deal in tokens we happen to be able to assign meaning to

we are doing the heavy lifting here

as long as our prompt plus its own output fit the context size, it'll happily parrot our neologisms back at us (and yes I use that metaphor advisedly: I think it is apt in this context)

FWIW I think RLHF is the star here in packaging it all in the most obliging way possible

-

@dingemansemark but they don't just 'deal in tokens': asking ChatGPT4o to identify a table in an image or asking it to draw a table is, at the end of the day, an action in the external environment, however ChatGPT4o happens to go about producing it, no?

and, of course we are doing most of the lifting, but that's also true of any single speaker vis a vis a linguistic community.

I didn't personally invent any of the words I typed out in this response, nor could I single-handedly change them.

-

@UlrikeHahn

the heavy lifting done by members of a community of practice is in large part reciprocal; in the funhouse mirror of LLMs, it's really all you

how does the response to the prompt come to be "an action in the external environment"? which environment, precisely?

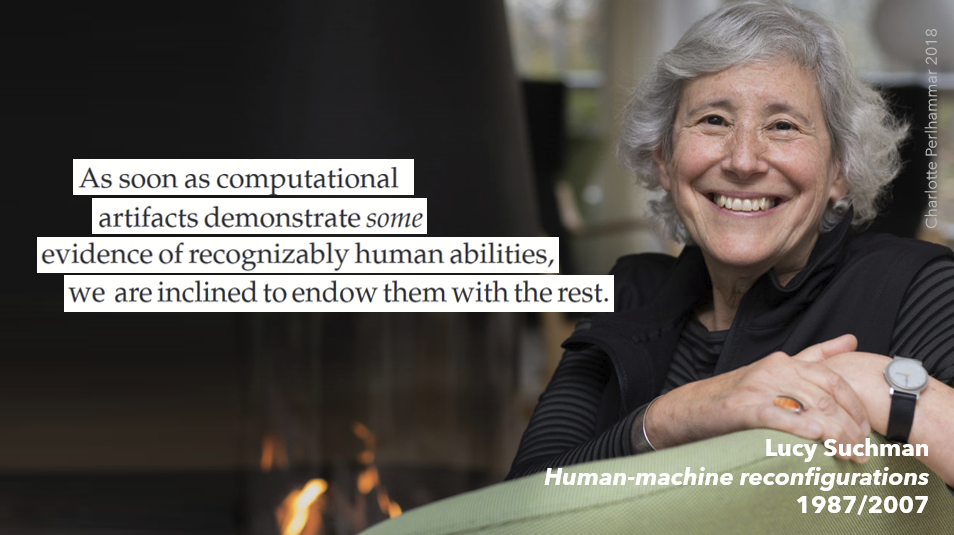

I don't understand the impulse to ascribe agency to an LLM and what seems to be a certain eagerness to obscure the processes of human sense-making and facilitation at work (see Lucy Suchman's Human/Machine Reconfigurations)

-

@dingemansemark drawing a red bounding box on an image or producing an image is 'an action in the external environment'.

And I am not 'eager to ascribe agency' to LLMs, I want to understand how they do or do not relate to a family of inter-related concepts ('meaning', 'communication', 'agency', 'mental state', 'belief', 'computation', etc.) because I'm a cognitive scientist and those are foundational concepts of the work I've been pursuing for many years.

That seems a modest goal, no?

-

@UlrikeHahn

but why then, as a cognitive scientist, ignore all the material contributions & human labour involved in making it seem so? I can't summarise Suchman in a toot but trust me she's worth reading

it seems to me a serious overreach to call whatever digital operations an LLM carries out when prompted (provided with material, text or otherwise) an 'action in the external environment' (why "the"? "external" how?). That is my ground for saying I see an eagerness to ascribe agency

-

@UlrikeHahn (I mean on one level I know why: cognitive science has for a long time been able to mostly ignore those material conditions & processes of situated action, choosing to privilege computations, representations, mental states, beliefs. Here too Suchman is an excellent starting point: her 1987 critique of the then-standard Schank & Abelson 'cognitive' account of planning is a model for the kind of move that we need, imo, if we want to think clearly about what LLMs are and what they do)

-

@UlrikeHahn @dingemansemark @davidschlangen One thing that occurs to me is that the required premise could be supported by the view that (speaker-) meaning something requires having intentions directed at the mental states of other speakers, and that that condition isn't met by AI.

That wouldn't be question begging, and it has a history in philosophy of language. I don't know whether people are in fact motivated by that view.